The AI Cognitive Atrophy Crisis (And How to Avoid It)

AI systems researcher and practitioner

- AI methodology

- neuroscience

- cognitive science

- recursive prompting

- MIT study

Everyone's been arguing about the MIT ChatGPT brain study. Is it proof AI makes you dumber? Should we be scared?

Wrong question.

The study didn't prove that AI makes you dumber. It proved something more specific: how you use AI determines whether your brain gets stronger or weaker.

Here's what everyone missed.

The Data They're Not Talking About

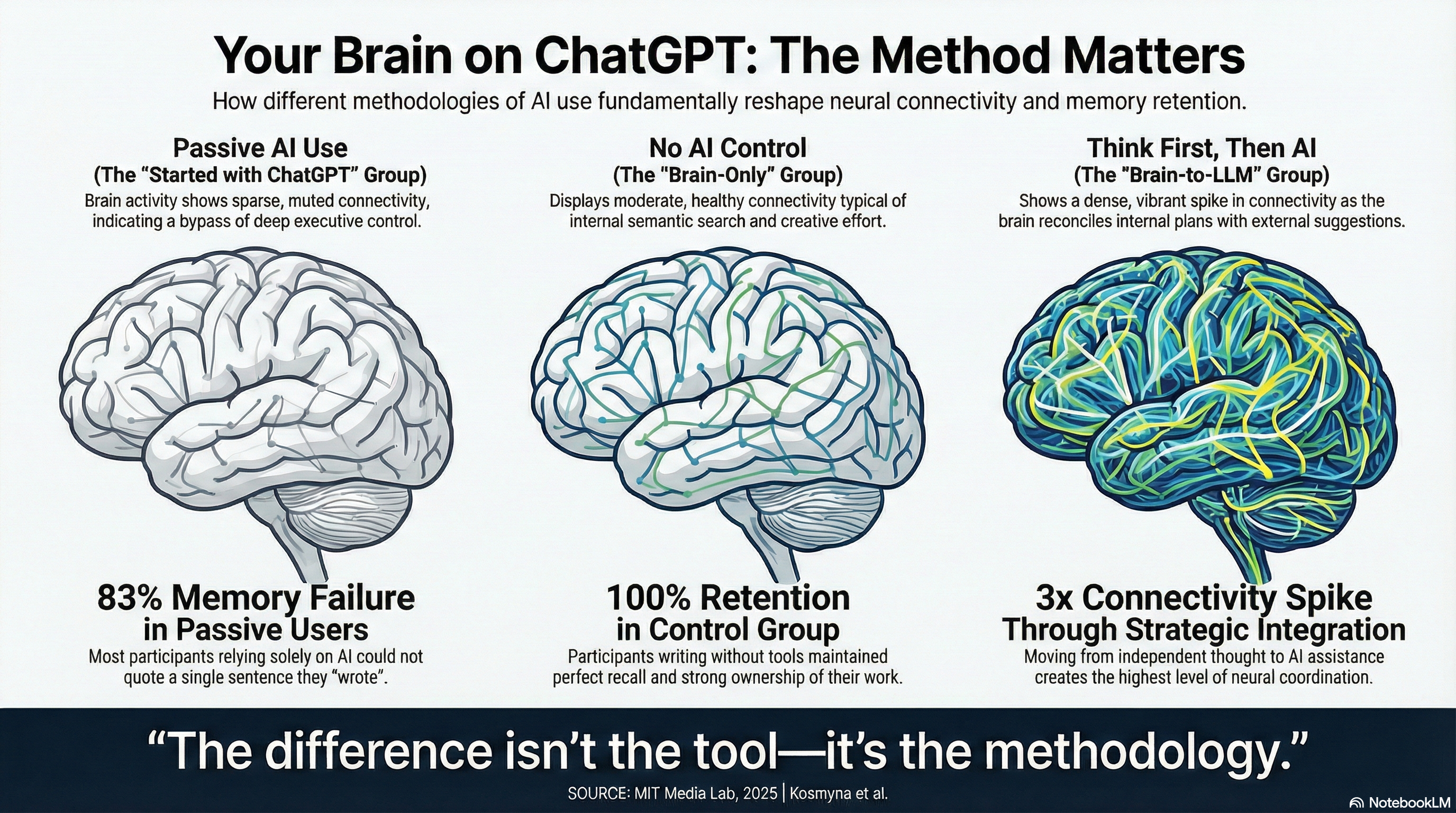

MIT researchers divided people into three groups for an essay-writing task. One group used ChatGPT. One used Google. One used only their brains.

The ChatGPT group showed the weakest brain connectivity patterns. Their frontal theta activity, linked to working memory and executive control, dropped significantly. Their alpha networks, responsible for internal attention and semantic processing, showed reduced engagement.

Here's the disturbing part: 83.3% of AI users couldn't correctly quote from the essay they had just written. Only 11% of non-AI users failed the same test.

But here's what makes it worse: 94.4% of those AI users were satisfied with their work. They felt productive. They felt like they had done good work.

Yet when asked about ownership, only about half claimed their essays were truly theirs. The rest reported partial ownership or felt conflicted about whether the work was really theirs at all.

Their confidence was high. Their sense of authorship was fragmented. Their actual retention was nearly zero.

Your Brain Is Physically Changing

The AI users showed significantly weaker frontal theta connectivity. That's the brain activity associated with deep memory consolidation and executive function. The circuits responsible for actually thinking about what you're doing went dormant.

They also showed reduced alpha network connectivity. That's the activity tied to internal attention and semantic processing. The brain regions that help you understand and integrate information weren't fully engaged.

The result wasn't just forgetfulness. It was something the researchers called "psychological dissociation."

You become the manager who signs off on work without knowing what it actually says. You feel ownership, but your brain never processed the content. You're productive in output, but absent in cognition.

The essays produced by AI users were "statistically homogeneous." They showed significantly less deviation from each other. Human teachers could identify AI-assisted work instantly — not because it was wrong, but because it was "soulless" and "empty."

You aren't just losing your unique voice when you use AI passively. You're physically rewiring your brain to process information at a surface level. You're becoming shallower.

Here's what bothers me most about this study: it happened after just one hour of use.

But There's Another Group

Here's the part that didn't make headlines.

In the fourth session, researchers did something interesting. They took people who had spent three sessions writing essays with zero AI assistance and asked them to use ChatGPT for the first time.

The result wasn't just different. It was dramatic.

These users didn't show the weak connectivity patterns of the original ChatGPT group. Instead, their brains showed a network-wide spike across all frequency bands:

- Delta band activity roughly tripled (from 0.637 to 1.948)

- Theta band, responsible for memory and executive control, jumped from 0.394 to 1.087

- Beta and alpha bands showed similar increases
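As a quick arithmetic check on the figures quoted above, here is a minimal Python sketch that computes the fold change for the two bands with stated values. The helper name `fold_change` and the `bands` dictionary are illustrative and not from the study; only the four numbers come from the text.

```python
# Band values as quoted in the list above (before -> after).
bands = {
    "delta": (0.637, 1.948),
    "theta": (0.394, 1.087),
}


def fold_change(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    return after / before


ratios = {name: fold_change(before, after) for name, (before, after) in bands.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x")
# prints:
# delta: 3.06x
# theta: 2.76x
```

So the delta increase is slightly more than threefold, and the theta increase is a little under threefold.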

Their brains didn't go quiet. They lit up.

Same AI tool. Opposite effect.

Why This Happened

The researchers called it "integration overhead."

Because these users had already formed their own ideas in previous sessions, they couldn't just accept ChatGPT's output passively. Their brains had to actively reconcile the AI's suggestions against their own pre-existing thoughts.

They had to judge. Filter. Integrate. Decide what to keep and what to reject.

The original ChatGPT group? They just accepted the output. Low brain connectivity.

This group? They had to fight with it. High brain connectivity.

The difference wasn't the technology. It was what happened before they used it.

When you bring your own thinking to AI, your brain has to work harder to integrate the tool. The "cognitive load" everyone fears? It's actually cognitive engagement. And engagement is what keeps your brain from atrophying.

The Real Divide

I see this split constantly. People reach out confused about why their AI outputs feel generic or why they're unsatisfied with what they're getting. They show me their workflows.

The pattern is always the same.

They start with AI. They ask ChatGPT to write something, accept the first output, and move on. They do this dozens of times a day. Each time, their brain learns it doesn't need to engage. Each time, the circuits for deep processing get a little weaker.

And each time, they feel productive. The satisfaction is real. The cognitive loss is invisible.

But the people who succeed with AI, the ones who don't show decline, do something different. They don't start with AI. They start with their own thinking.

The irony is brutal. We adopt AI to get smarter. To work faster and think better. But many of us are using it in a way that makes us demonstrably dumber.

The MIT study isn't an outlier. It's measuring what's already happening to millions of people.

But it also showed the way out. The researchers concluded: "Strategic timing of AI tool introduction following initial self-driven effort may enhance engagement and neural integration."

Translation: If you think first, then use AI strategically, your brain stays engaged. Maybe even gets stronger.

The question isn't whether to use AI. It's when and how.

The Hidden Divide

But here's what the study doesn't tell you. And what most of the coverage completely missed.

Not everyone using AI is experiencing cognitive atrophy.

There's a split happening that's invisible in the headlines. Some people use AI extensively and get sharper. Others use it the same amount and get duller.

The MIT study captured this accidentally. But other research has been tracking it deliberately.

The difference isn't obvious at first. But over time, the gap becomes impossible to ignore.

And it traces back to something fundamental about how we learn.

Two Models, Two Outcomes

I wrote about this pattern in an earlier piece on the hidden divide reshaping our workforce. Physicist David Deutsch, building on philosopher Karl Popper's critique of the "bucket theory of the mind," describes two ways of thinking about the mind. One is the "bucket model." The other is what I call the error-correction model.

The Bucket Model treats your mind like a container. Learning means filling it with correct answers. Intelligence means having the right information stored away. When you encounter a problem, you pour in the solution.

This is how most of us were educated. Memorize the facts. Repeat them on the test. Get the grade.

The Error-Correction Model treats your mind differently. It's not a container. It's a generator of explanations that must be tested, challenged, and refined. Learning means improving your ability to detect and fix errors. Intelligence means building better processes for thinking.

I began to learn this model in college when a history professor handed us primary sources instead of textbooks. We had to evaluate competing accounts. We had to spot contradictions. We had to build our own understanding from messy, conflicting evidence.

It changed how I think about everything. I have kept learning and practicing critical-thinking techniques ever since.

Here's why this matters for AI: these two models produce completely different behaviors when you interact with large language models.

Pattern A: Passive Consumption

If you think in the bucket model, AI looks like the perfect oracle. It has all the answers. Your job is simple: ask the question, accept the answer, move to the next task.

The workflow is: Prompt → Accept → Move On.

No iteration. No verification. No real engagement with what the AI produced.

This is what the MIT study measured. This is the group that showed weaker frontal theta connectivity. This is the group that couldn't remember what they had just written.

Their brains adapted by offloading the work. Why engage deeply when the AI has already given you the answer?

The problem isn't laziness. The problem is the model. If you believe the AI is giving you correct information to pour into your bucket, then questioning it feels like wasted effort.

But your brain doesn't distinguish between "efficiently getting answers" and "not thinking." It just sees that the circuits for deep processing aren't needed anymore. So it prunes them.

The result is cognitive debt. You get faster at producing output. But you get worse at actually thinking.

Pattern B: Active Collaboration

If you think in the error-correction model, AI looks completely different. It's not an oracle. It's a thinking partner that makes mistakes and needs correction.

The workflow is: Probe → Evaluate → Refine → Verify.

You don't accept the first output. You generate multiple possibilities and compare them. You choose a direction deliberately. You iterate until the result matches what you actually need. You check the work.
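To make the Probe → Evaluate → Refine → Verify loop concrete, here is a minimal Python sketch. It is one possible shape for the workflow, not a definitive implementation. Every name in it (`collaborate`, `generate`, `choose`, `critique`, `verify`, `fake_generate`) is hypothetical. `generate` stands in for whatever model call you use, and the other three callables stand in for your own judgment. The stubs at the bottom exist only so the sketch runs without a model or a person at the keyboard.

```python
from typing import Callable, List


def collaborate(
    task: str,
    generate: Callable[[str], str],      # hypothetical wrapper around your model
    choose: Callable[[List[str]], int],  # human picks the index of a candidate
    critique: Callable[[str], str],      # human feedback; "" means satisfied
    verify: Callable[[str], bool],       # human final check
    n_candidates: int = 3,
    max_rounds: int = 3,
) -> str:
    # Probe: ask for several candidates instead of accepting the first one.
    candidates = [generate(f"{task} (variant {i + 1})") for i in range(n_candidates)]
    # Evaluate: the human compares them and picks a direction deliberately.
    draft = candidates[choose(candidates)]
    # Refine: iterate on human feedback until there is nothing left to fix.
    for _ in range(max_rounds):
        feedback = critique(draft)
        if not feedback:
            break
        draft = generate(f"Revise this draft.\nDraft: {draft}\nFeedback: {feedback}")
    # Verify: the human signs off, or the result is rejected.
    if not verify(draft):
        raise ValueError("draft failed human verification")
    return draft


# Stand-in model that records every prompt it receives (illustration only).
calls: List[str] = []


def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return f"<output for: {prompt[:40]}>"


# One round of scripted feedback, then the "human" is satisfied.
feedback_queue = ["Too generic; add my point about commuting."]

result = collaborate(
    task="Outline an essay on remote work",
    generate=fake_generate,
    choose=lambda options: 1,
    critique=lambda draft: feedback_queue.pop(0) if feedback_queue else "",
    verify=lambda draft: True,
)
print(len(calls), "model calls")
```

The design point is that the model is never called without a human decision on either side of it: choosing among candidates, writing feedback, and signing off are all steps the code cannot skip.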

This keeps your executive functions engaged. Your frontal theta circuits stay active because you're constantly making decisions. Your alpha networks stay connected because you're integrating the AI's output with your own understanding.

You're thinking with the AI. Not sleeping while it works.

This is the group that doesn't show cognitive atrophy. Some show cognitive gains.

Here's the surprising part: the people who are best at this aren't who you'd expect.

The Neurodivergent Advantage

Student T had dyslexia and struggled with traditional writing. Her GPA was 1.85.

Then she started using AI deliberately. She used it to structure her lateral thinking into linear formats. She used it for scaffolding, not replacement. She critically evaluated every suggestion against her own understanding and course objectives.

Her GPA rose to 3.35. (Mittler, S., 2025. Harnessing Generative AI to Overcome Executive Dysfunction)

Analysis of 55,000+ Reddit posts showed the same pattern. ADHD and autistic users reported overwhelmingly positive experiences with AI (Carik et al., 2025). They naturally developed strategies that match what the MIT study shows works: explicit structure, clear boundaries, active oversight.

They treat AI as what Matt Ivey calls a "cognitive partner" with defined layers. They use what he describes as the "Cognitive Handshake": a 10-80-10 split where they provide the initial spark through voice input (preserving lateral thinking), let AI handle the linear structuring, then verify everything as the final step. (Ivey, M., 2025. The Cognitive Partner Model)

Voice input forces you to think the thought before you delegate the writing. It keeps the spark human. The verification step keeps you engaged as the judge, not just the consumer.
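For a rough sense of what a 10-80-10 split looks like in practice, here is a small Python sketch that divides a work session into the three phases. Treating the split as a share of session time is my assumption; the source only gives the ratio. The function name `handshake_budget` and the phase labels are illustrative.

```python
def handshake_budget(total_minutes: float) -> dict:
    """Split a session into the 10-80-10 phases described above."""
    # Percentages come from the text; mapping them onto minutes is an assumption.
    phases = [
        ("spark (you, by voice)", 10),
        ("structuring (AI)", 80),
        ("verification (you)", 10),
    ]
    return {name: total_minutes * pct / 100 for name, pct in phases}


budget = handshake_budget(60)
for phase, minutes in budget.items():
    print(f"{phase}: {minutes:g} min")
# prints:
# spark (you, by voice): 6 min
# structuring (AI): 48 min
# verification (you): 6 min
```

In a one-hour session, that leaves six minutes at each end for the parts that have to stay human.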

This isn't theoretical. It's what successful users actually do.

Formalizing What Works

I've spent months analyzing my own AI interactions. I examined 441 conversation threads. I tracked 1,980 individual prompting moves. I identified patterns in what worked versus what failed.

The successful interactions followed specific structures. I've formalized these into what I call Recursive Prompting — a systematic approach to working with AI that maintains cognitive engagement while leveraging AI's capabilities.

You can find the complete methodology and 16 ready-to-use templates in my whitepaper on GitHub. It breaks down 13 distinct techniques across 20 recurring patterns.

The core principle is simple: you maintain integration overhead. You force your brain to reconcile AI output with your own thinking. You create the conditions that caused connectivity to spike in the Brain-to-LLM group, the participants who wrote unaided for three sessions before using ChatGPT.

This isn't about prompt engineering. It's about process engineering. It's about structuring your interactions so your brain has to stay engaged.

When I measured my own results using these techniques versus single-shot prompting, I saw approximately 34% improvement in output quality. More importantly, I maintained cognitive control. I never felt like the AI was thinking for me. I felt like I was thinking better with AI's help.

The Two-Tier Future

The researchers are clear about the implications. As I argued in my earlier piece on this divide, we're heading toward a split workforce.

Tier 1: People who use error-correction methods with AI. They maintain cognitive engagement. Their brains adapt by integrating the tool as an extension of their thinking. They get sharper over time.

Tier 2: People who use bucket-model approaches with AI. They offload cognitive work. Their brains adapt by pruning the circuits they no longer use. They get shallower over time.

This gap will widen. Every hour spent using AI the wrong way compounds the problem. Every interaction trains your brain either toward engagement or toward passivity.

The AI divide isn't about access to technology. It's about access to methodology.

What You Can Do

Your brain is adapting to AI right now. The only question is whether you're adapting deliberately.

The MIT study proves that cognitive atrophy isn't inevitable. The Brain-to-LLM group showed it's possible to use AI in ways that increase brain connectivity rather than decrease it.

The key is integration overhead. You need to bring your own thinking to the interaction. You need to judge, filter, and reconcile AI output against your own ideas. You need to maintain the cognitive load that keeps your executive functions engaged.

I've built a complete system for doing this: the Recursive Prompting methodology, with 13 techniques and 16 ready-to-use templates, available as a whitepaper on GitHub.

The Antidote to Cognitive Atrophy

Outsourcing your thinking to generic, chat-based AI accelerates cognitive atrophy.

I build specialized research pipelines designed to do the opposite: they handle the scraping, aggregation, and synthesis in the background so you can focus on high-level analysis. Built to amplify human intelligence, not replace it.

The autonomous briefing system running this site is the live version of that architecture. If you want to deploy a dedicated research engine for your own business or project, here is how I build them.

See build scopes and pricing →
